Arch is always “latest and greatest” for every package, including the kernel. It lets you tinker, and it’s always up to date. However, a rolling release introduces more ways to break your system: things start conflicting under the hood in ways you weren’t aware of, configurations that used to work stop working, and so on.

This contrasts with everything built on Debian, such as Mint. Mint adds a bunch of conveniences on top, but the underlying “how it all fits together” is still Debian. Debian instead targets stable releases, each shipping a complete set of known-stable packages. That makes it great for set-and-forget uses, like servers that you just want to work. It was also very important back in the ’90s, when Internet connectivity was hard to come by. But it also means Debian lags behind application software releases, by anywhere from a few months to more than a year. The traditional workaround is “just add this other package repository,” which, as with Arch, can eventually break your system by accident.

But neither disadvantage is as much of a problem now as it used to be. Much more software is relatively stable these days, and for the things where you really do need the absolute latest version, you can often find a Flatpak, Snap, or AppImage. These formats are more self-contained and don’t rely on the dependencies you have installed: just “download and run.”

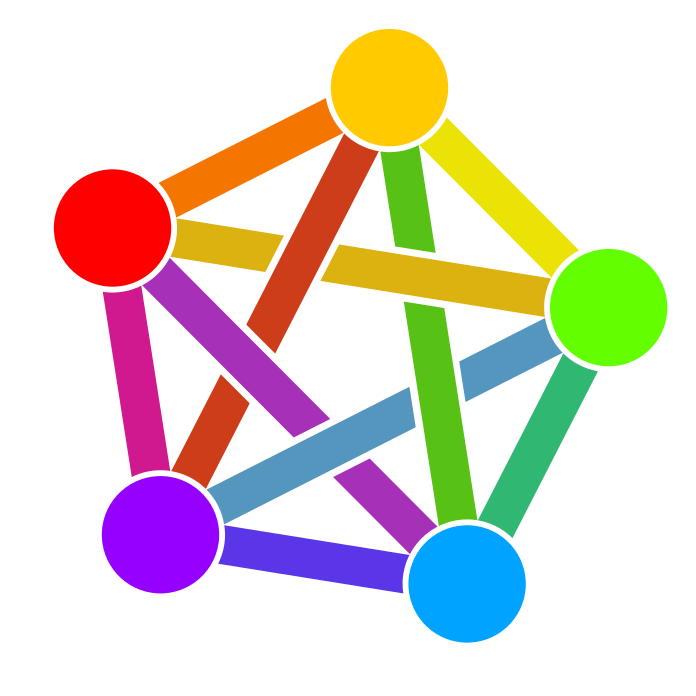

Most popular distros today are Arch- or Debian-flavored: same system, different veneer. Debian itself has become a better desktop option in recent years, largely thanks to improvements in its installer.

I’ve been using Solus 4.4 lately, which has its own rolling-release package system. It has less software, but the experience is tightly designed for the desktop and doesn’t push me into a terminal the way the more classical Unix designs behind Arch and Debian do. The problem both of those face as desktops is that they assume up front that you may only have a terminal. So the “correct way” of doing everything tends to start and end in the terminal, and the desktop feels glued on: it works for some things but not others.

Some of my own thoughts, which partly rebut the article:

The author’s bio says they have been doing this professionally for about 5 years, which, taken at face value, means they haven’t seen the kinds of transitions that have happened in the past or how widely game scope can vary. The way Godot does things has some wisdom of age in it. Even in its years as a proprietary engine (which you can learn something about from Juan’s MobyGames credits), the games it shipped were aimed at the bottom of the market in scope and hardware spec: a PSP game, a Wii game, an Android game. The luxury of small scope is that optimization never becomes a broad problem that has to be solved globally; it’s always one specific thing that needs to be fast. Optimizing for something bigger requires profiling data from production scenes. It’s not something you want to approach by saying “I know what the best practice is” and immediately architecting around it based on a shot in the dark. An engine that just does the simple thing every time, by contrast, makes it easy to make the changes needed to ship.